2021 January 22,

The first part of CS 311 develops, pretty much from scratch, a 3D graphics engine based on the triangle rasterization algorithm. Except where otherwise noted, all assignments are due at the start of the next class meeting. Except where otherwise noted, you are encouraged to work with one partner. When two people work together, they hand in just one copy of the work, that has both of their names on it.

We need to get certain tools set up on your computer, and we might run into problems, so we should get started immediately. Follow the setup instructions. If you have not completed these instructions by 11:59 PM on the first day of class, then e-mail me, so that we can figure out what needs to be done. You're welcome to work with a partner, but each student should end up with their own functioning setup.

While you're waiting for software to download and install, you might be able to work ahead...

This part of the homework is due at 11:59 PM on the first day of class. Read the syllabus. In a text editor (not a word processor), make a plain text file (not a Word, RTF, PDF, etc. document) containing your answers to the following questions. Each student should complete this questionnaire individually.

When you're done, follow these instructions to mount the COURSES file server, and drop your text file in your hand-in folder there. If you're off campus, then you might need to scroll down to the off-campus instructions. Warning: Once you hand in your file, you are no longer able to edit it.

The rest of the Day 01 work is due at the start of class on Day 02. You are encouraged, but not required, to work with a partner. If you would like help in finding a partner, then e-mail me. I enjoy helping. :)

000mainHelloWorld.c: Study this file, which means: Read it, follow the instructions for compiling and running it, figure out what each line of code does, and try variations if anything is unclear.

000mainIntsDoubles.c: Study this file.

000mainIfWhileFor.c: Study.

000mainFunctions.c: Study.

000pixel.h: Skim this file, which means: You should be familiar with its contents, but you are not expected to understand the details yet.

000pixel.c: Compile this file into 000pixel.o by following the instructions at the top. You are not expected to read or understand this file.

000mainLinking.c: Study.

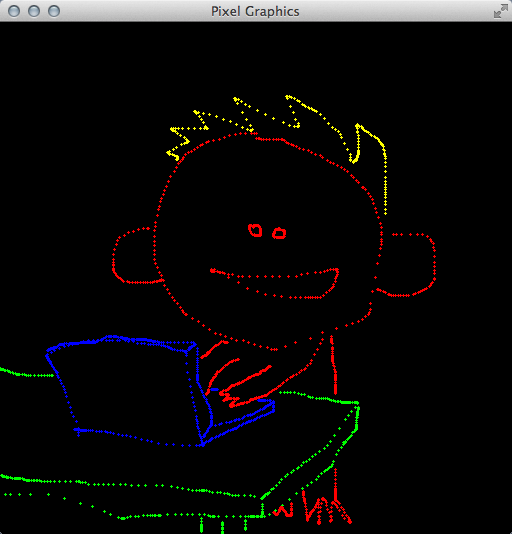

010mainPainting.c: Make a copy of 000mainLinking.c called "010mainPainting.c". Edit this file to make a simple painting program. Your program should make strokes of color whenever the user drags the mouse across the window. (Dragging means moving the mouse while holding down the left mouse button.) Your program should also let the user change the paint color by pressing the R, G, B, C, M, Y, K, and W (white) keys. To pass information among different parts of your user interface, you may have to use a few global variables. For example, here are some of mine, which I declare after the #include statements and before my first function definition:

double red = 1.0, green = 1.0, blue = 1.0; int mouseIsDown = 0;

You may add other features as you like. For example, my program lets the user clear the window and change the brush size. Here's a screenshot (click to enlarge):

Once your program is tested, make sure that your code is clean and commented. If you are working with a partner, then both names should be listed in a comment near the top of the file. Make sure that both of you have a copy of your work for future reference. But only one of you places your 010mainPainting.c into her or his hand-in folder on COURSES, by the start of class on Day 02.

If you have more time, then feel free to work ahead. Start with 000mainArrays.c, 000vectors.pdf, and 030vector.c from the next block of homework. If you have even more time, work on 000matrices2By2.pdf, 000mainMatrices2By2.c, and 030matrix.c from the block after that.

Today we start implementing our rasterization-based graphics engine. So your first programming task is to write the rasterizer. It is important that you carefully write and thoroughly test this code, because the next three or four weeks of the course depend on it.

020triangle.c: Make this file. In it write the following function to rasterize a triangle. The inputs are three vertices of a triangle and an RGB color. Assume that the vertices are not co-linear. Assume that they are given in counter-clockwise order. Make no other assumptions. For each pixel in the triangle, the function calls pixSetRGB with the given RGB color. Write helper functions if you wish.

void triRender(

double a0, double a1, double b0, double b1, double c0, double c1,

double r, double g, double b) {

}

020mainRasterizing.c: Make this file. Near the top, but after all header files, #include 020triangle.c to include your rasterizer code. In the main function, demonstrate that your rasterizer works. Here's a screenshot of mine:

Make sure that 020triangle.c and 020mainRasterizing.c are functioning, clean, and commented. In comments near the tops of the files, make sure that both students in your partnership are credited. Make sure that both students have copies of these files for future reference. Then just one student places the file in her or his hand-in folder on COURSES.

000mainArrays.c: Study.

000vectors.pdf: Study.

030vector.c: Some of these vector functions are already written. Implement the others. (We add to this file in the coming weeks.)

If you have more time, then work on 000matrices2By2.pdf, 000mainMatrices2By2.c, and 030matrix.c from the next block of homework.

Today we add the ability to interpolate quantities across triangles. After rasterization, interpolation is the second-most fundamental concept in our graphics engine. It enables a wide variety of effects — far beyond what we see today.

030triangle.c: Make a copy of your 020triangle.c. In it, alter your rasterizer to use C arrays wherever appropriate. For starters, the interface of the triRender function should be changed as follows. (In the implementation, a0 becomes a[0], etc.)

void triRender(

const double a[2], const double b[2], const double c[2],

const double rgb[3])

030mainInterpolating.c: In a copy of 020mainRasterizing.c, include 030triangle.c rather than 020triangle.c. Update your demonstration code to make sure that the new triRender works, before proceeding to the next steps.

000matrices2By2.pdf: Study.

000mainMatrices2By2.c: Study.

030matrix.c: This file specifies the interfaces for various matrix functions. Fill in the implementations. (We add to this file in the coming weeks.)

030mainTest.c: Without editing this program at all, you should be able to compile and run it. It should show a large yellow triangle slowly rotating. If you encounter any errors, then something is wrong in your 030vector.c, 030matrix.c, and/or 030triangle.c.

030triangle.c: Implement linear interpolation of color. The interface for triRender is now

void triRender(

const double a[2], const double b[2], const double c[2],

const double rgb[3], const double alpha[3], const double beta[3],

const double gamma[3])

where the three new parameters are RGB colors. Modulate the interpolated color with the uniform color rgb. Where appropriate, use functions from 030vector.c and 030matrix.c. (But don't include those files here. They are included in 030mainInterpolating.c.)

030mainInterpolating.c: Include 030vector.c, 030matrix.c, and 030triangle.c in that order. Demonstrate that your color interpolation works. Here's a screenshot of mine:

You have four files to hand in: 030vector.c, 030matrix.c, 030triangle.c, and 030mainInterpolating.c. As usual, check that your files are functioning, clean, and commented, both partners are credited, and both partners have copies. Then submit one copy of the files to COURSES.

If you have more time, then work on 000mainPointers.c and 000mainStructs.c from the next block of homework.

Interpolation is used to implement a bunch of techniques other than blending colors. The first of these techniques is texture mapping.

000mainPointers.c: Study.

000mainStructs.c: Study.

stb_image.h: Download. This is a public-domain image-loading library. Despite the ".h" suffix, it contains not just interfaces but also implementations. You are not expected to read it or even skim it. Parts of it are hard to understand unless you know C well. This library is used by a certain function in 040texture.c, which I have already written for you.

040texture.c: Skim this file, which means: You should be familiar with all of the constants, structs, and functions, but you may not understand the details of some of the function implementations. This file defines a data type, texTexture, that we use for texture mapping. It's all complete, except that the texSample function is missing one key feature: When asked for linear filtering, it responds with nearest-neighbor filtering. You fill in that feature later in this assignment. For now, just pretend that linear filtering is working.

040triangle.c: In a copy of 030triangle.c, update triRender to have the following interface. The quantities being interpolated are now texture coordinates, not colors. Just before you call pixSetRGB, perform a texture lookup using texSample. Modulate that texture RGB by rgb. Pass that modulated color to pixSetRGB.

void triRender(

const double a[2], const double b[2], const double c[2],

const double rgb[3], const texTexture *tex, const double alpha[2],

const double beta[2], const double gamma[2])

040mainTexturing.c: In a copy of 030mainInterpolating.c, include 040texture.c and demonstrate your new triRender. Of course, somewhere you'll have to make a texTexture texture to pass to triRender. Use texInitializeFile, and don't forget to texDestroy at the end. Depending on the image that you use, the texel dimension might be 1, 3, 4, or some other value. Whatever it is, use the texture in whatever (non-trivial) way you want. If your results look wrong, and you don't know why, then try a different image. Here's a screenshot of my program, which uses a colorized photograph of one of my favorite mathematicians:

040texture.c: Now let's implement linear filtering. Find the relevant part of texSample, and replace it with your own linear filtering code. Do not assume that the texels are RGB colors. Instead assume that they are arrays of dimension tex->texelDim.

040mainTexturing.c: Add a keyboard handler, so that by pressing GLFW_KEY_ENTER the user can quickly switch between nearest-neighbor and linear filtering. It will help you and the grader evaluate your filtering. If you cannot see much of a difference, then try using a coarser image (fewer pixels wide and tall).

In the usual way, clean up 040mainTexturing.c and all of the files on which it depends: 040texture.c, 040triangle.c, and whatever image you're using. Share them with your partner, and submit them to COURSES.

Today we abstract a crucial part of our code. This abstraction makes our graphics engine much more flexible. It also aligns our engine better, with how graphics is done in the real world.

050shading.c: Create this file. In it, define a struct data type, following 000mainStructs.c, called shaShading. Right now it just has three int members: unifDim, attrDim, and texNum. (Later in the course it holds more information.)

050mainAbstracted.c: Study this file, but you will not be able to run it yet, because of three issues. The first issue is that you haven't written the colorPixel function. The second issue is that you don't have 050triangle.c. The third issue is that your image file might not have the same name as mine. Let's deal with these issues in reverse order. In main, change the name of the image file to whatever texture file you prefer.

050triangle.c: Make a copy of 040triangle.c. In it, update the interface for triRender to the one below. You now have an array of uniform information, an array of texture pointers, three arrays of vertex attributes, and a shaShading struct that specifies how many elements are in each of those arrays. Edit your implementation so that, at each rasterized pixel, it computes an interpolated attribute array attr of dimension sha->attrDim, invokes colorPixel to get an RGB color, and passes this color to pixSetRGB. (Let me emphasize that pixSetRGB is called from triRender, not from colorPixel.)

/* Assumes that the 0th and 1th elements of a, b, c are the 'x' and 'y'

coordinates of the vertices, respectively (used in rasterization, and to

interpolate the other elements of a, b, c). */

void triRender(

const shaShading *sha, const double unif[], const texTexture *tex[],

const double a[], const double b[], const double c[])

050mainAbstracted.c: Now write colorPixel. It should modulate a texture color, interpolated color, and uniform color all together. Here's mine:

060mainEffect.c: Make a copy of 050mainAbstracted.c. Edit it to produce an entirely different visual effect. Be creative. My only requirement is that you must use at least two textures. The most interesting edits are in colorPixel, but there may be some supporting edits throughout 060mainEffect.c. The rest of your files, such as 050triangle.c and 050shading.c, should be unchanged. If you want me to share your demo with the class, then e-mail me a screenshot and a brief (one or two sentences) explanation of how you made your effect. For example, mine is below. I use the texture coordinates to sample an RGB from a cloud texture, then use the RG from that RGB as texture coordinates to sample the final RGB from my usual Emmy Noether texture.

In the usual way, clean up 050mainAbstracted.c, 060mainEffect.c, and all of the files on which they depend. Share them with your partner, and submit them to COURSES.

We're done drawing single 2D triangles. Now we start to draw meshes made of many 2D triangles. Also we introduce the vertex shader abstraction.

070mesh.c: Skim. This file implements a data type for triangular meshes. You can see initializer functions, accessor functions, a destroyer function, and functions to read and write files. There's also meshRender. Fill in its implementation, using other functions such as meshGetTrianglePointer, meshGetVertexPointer, and triRender. Don't overlook the part of the specification about attrDim.

070mesh2D.c: Skim. This file offers "convenience" functions for building some common kinds of 2D meshes.

070mainMesh.c: Include 070mesh.c and 070mesh2D.c after 050triangle.c. Demonstrate that your mesh renderer is working correctly. I recommend using the convenience builders and one texture.

Now we make a big change to our graphics engine. It requires alterations to several files (because our engine is not very well abstracted, because we're learning how to abstract it right now). The change is: What we used to call attributes are now either attributes or varyings, depending on whether they exist before or after the vertex transformation.

080shading.c: Add an int varyDim member to the shading struct.

080triangle.c: Your triangle code will never again see an attribute vector. It will see varying vectors instead. Make all required changes. For example, replace sha->attrDim with sha->varyDim. When I did this, my code had a bunch of variable names and comments that referred to attributes, so I took this opportunity to clean those up too.

080mainPosable.c: Because colorPixel is called only by triRender, and triRender sees varyings not attributes, it follows that colorPixel sees varyings not attributes. So, in colorPixel, replace attr with vary, ATTRX with VARYX, etc. Just below colorPixel, add the transformVertex function below. Above colorPixel, keep the constants ATTRX, etc., but add constants VARYX, etc. And don't forget to initialize the varyDim member of your shading struct in main.

/* Outputs vary, based on the other parameters, which are unaltered. Like

colorPixel, this function should not access any variables other than its

parameters and any local variables that it declares. */

void transformVertex(

int unifDim, const double unif[], int attrDim, const double attr[],

int varyDim, double vary[]) {

/* For now, just copy attr to vary. Baby steps. */

vary[VARYX] = attr[ATTRX];

vary[VARYY] = attr[ATTRY];

vary[VARYS] = attr[ATTRS];

vary[VARYT] = attr[ATTRT];

}

080mesh.c: In meshRender, use transformVertex to transform attributes to varyings before passing them to triRender. Perhaps you can imagine a couple of ways to do this. I recommend that you pursue the simplest, easiest option — for now, at least.

080mainPosable.c: Test whether everything works up to this point. Then add one uniform to express rotation and two uniforms to express translation. Implement the rotation followed by translation in transformVertex. I recommend that you have a time step handler that animates the rotation and/or translation, because animations give you more debugging information than still images do. Here's a still image of my program:

Clean up and hand in 080mainPosable.c and its dependencies. (You are not required to hand in 070mainMesh.c.)

Today's work doesn't really add any new features. Instead, it organizes, accelerates, and stress-tests the code that we already have. That's valuable work, because we're about to build a 3D graphics engine atop this 2D foundation, and we want the foundation to be as strong as possible.

Together, the vertex and fragment shaders constitute a single shader program. A shaShading struct should describe a complete shader program — including the functions. For then we can conveniently switch shader programs at run time. (A real graphics program might use several shader programs in each animation frame.)

000mainFunctionPointers.c: Study.

090shading.c: Add colorPixel and transformVertex members to the shading struct. They are function pointers.

090triangle.c: Replace colorPixel with sha->colorPixel.

090mesh.c: Replace transformVertex with sha->transformVertex.

090mainAbstracted.c: Study this file, focusing on the two comments that describe crucial changes. Change the name of the image but nothing else in this file. Test that it works with the rest of your code. If not, then fix the rest of your code, not this file.

The next issue is that our approach to writing transformVertex is inefficient (why?), and it will become more inefficient as the course progresses. So let's take this opportunity to restructure the code a bit.

000matrices3By3.pdf: Study.

100matrix.c: In a copy of 030matrix.c, implement the following functions.

/* Multiplies the 3x3 matrix m by the 3x3 matrix n. The output CANNOT safely

alias the input. */

void mat333Multiply(

const double m[3][3], const double n[3][3], double mTimesN[3][3])

/* Multiplies the 3x3 matrix m by the 3x1 matrix v. The output CANNOT safely

alias the input. */

void mat331Multiply(

const double m[3][3], const double v[3], double mTimesV[3])

/* Builds a 3x3 matrix representing 2D rotation and translation in homogeneous

coordinates. More precisely, the transformation first rotates through the angle

theta (in radians, counterclockwise), and then translates by the vector t. */

void mat33Isometry(double theta, const double t[2], double isom[3][3])

100mainHomogeneous.c: Make a copy of 090mainAbstracted.c. Replace the three rotation-translation uniforms with nine uniforms. They store a 3x3 matrix. In your time step handler, configure this matrix using code like the code below. The rotation angle and translation vector are global variables, one or both of which is altered by the time step handler. UNIFMODELING is a compile-time constant storing the index of the first (0th) entry of the matrix in the uniforms. You are not expected to fully understand the pointer manipulation in the third line of code. Intuitively, it packs a 3x3 matrix into a 9-dimensional vector.

double isom[3][3]; mat33Isometry(rotationAngle, translationVector, isom); vecCopy(9, (double *)isom, &unif[UNIFMODELING]);

100mainHomogeneous.c: In transformVertex, you can "unpack" the 9-dimensional vector back into a 3x3 matrix and use it as follows. You have to figure out what attrHomog and varyHomog are, and how they fit into the rest of your code. Once you're done, test your program. Its output should be slightly faster than, but otherwise identical to, that of 090mainAbstracted.c.

mat331Multiply((double(*)[3])(&unif[UNIFMODELING]), attrHomog, varyHomog);

Let's make a serious test of everything up to this point, so that we can be confident in moving forward to 3D.

110mainShadings.c: Make a demo program that renders (at least) two meshes with two different shading structs. To make the shading structs genuinely different, let's say that they should have different shader functions attached. They may also differ in their integer members. If you want me to share your demo with the class, then e-mail me a screenshot and a short description. Screenshots of two examples are below. In the first example, the body of the spaceship is a square texture-mapped with one texture. The exhaust plume is a triangle with no texture. The exhaust plume fades in and out, depending on whether the user is firing the spaceship's main rocket. The second example is a Sokoban clone with a psychedelic animated background. (These are prototypes of video games. Your program does not have to be interactive at all.)

Clean up and hand in 110mainShadings.c and its dependencies. (You are not required to hand in 090mainAbstracted.c or 100mainHomogeneous.c.)

Today we transition from 2D to 3D. This change requires some math, which you will implement. It requires 3D meshes, which I have implemented for you. It requires numerous other small changes, as we discuss in class.

000vectorsMore.pdf: Study.

120vector.c: In a copy of 030vector.c, implement the following functions.

/* Returns the dot product of the vectors v and w. */ double vecDot(int dim, const double v[], const double w[]) /* Returns the length of the vector v. */ double vecLength(int dim, const double v[]) /* Returns the length of the vector v. If the length is non-zero, then also places a normalized (length-1) version of v into unit. The output can safely alias the input. */ double vecUnit(int dim, const double v[], double unit[]) /* Computes the cross product of v and w, and places it into vCrossW. The output CANNOT safely alias the input. */ void vec3Cross(const double v[3], const double w[3], double vCrossW[3]) /* Computes the vector v from its spherical coordinates. rho >= 0.0 is the radius. 0 <= phi <= pi is the co-latitude. -pi <= theta <= pi is the longitude or azimuth. */ void vec3Spherical(double rho, double phi, double theta, double v[3])

000matrices4By4.pdf: Study.

120matrix.c: In a copy of 100matrix.c, implement the following functions. You might find it useful to implement helper functions such as mat33Add. That's up to you.

/* Given a length-1 3D vector axis and an angle theta (in radians), builds the

rotation matrix for the rotation about that axis through that angle. */

void mat33AngleAxisRotation(

double theta, const double axis[3], double rot[3][3])

/* Given two length-1 3D vectors u, v that are perpendicular to each other.

Given two length-1 3D vectors a, b that are perpendicular to each other. Builds

the rotation matrix that rotates u to a and v to b. */

void mat33BasisRotation(

const double u[3], const double v[3], const double a[3],

const double b[3], double rot[3][3])

/* Multiplies m by n, placing the answer in mTimesN. The output CANNOT safely

alias the input. */

void mat444Multiply(

const double m[4][4], const double n[4][4], double mTimesN[4][4])

/* Multiplies m by v, placing the answer in mTimesV. The output CANNOT safely

alias the input. */

void mat441Multiply(

const double m[4][4], const double v[4], double mTimesV[4])

/* Given a rotation and a translation, forms the 4x4 homogeneous matrix

representing the rotation followed in time by the translation. */

void mat44Isometry(

const double rot[3][3], const double trans[3], double isom[4][4])

120mesh3D.c: Skim. This file contains "convenience" functions for building 3D meshes. The attribute dimensions are 3 + 2 + 3 = 8. The first three attributes are X, Y, Z position. The next two attributes are S, T texture coordinates. We talk about the last three attributes later in the course. For now, all you need to know is that they are often called N, O, P.

120triangle.c: Now that we're working in 3D, it is possible that triRender will be passed a co-linear or clockwise-ordered triangle. When either of those happens, triRender should draw nothing. My code has always (since 030triangle.c) done this backface culling automatically, by checking that the determinant returned by mat22Invert is positive. If you've been doing the same, then 120triangle.c is just a copy of 090triangle.c. If you've not been doing the same, then you need to implement this feature now. The details depend on how your triRender code is structured. For example, some students reorder the vertices into clockwise order, which their algorithm then rasterizes. They might consider a cross product test before reordering, instead of my determinant test after reordering. If you don't know what I'm talking about, then re-read 000vectorsMore.pdf.

120main3D.c: Alter the name of the image file, but make no other alterations. Study. The program should display a rotating 3D box as in the screenshot below. If not, fix your other files. (I made this file by starting from 100mainHomogeneous.c and making many small edits as described in class.)

Clean up and hand in 120main3D.c and its dependencies.

Today we add depth buffering to our graphics engine. Unfortunately, this feature requires edits to many files. Roughly speaking, my instructions work from the deepest part of the code (the shaders) up to the shallowest part (the main function).

130depth.c: Skim.

130mainDepth.c: Start with a copy of 120main3D.c. Enlarge your varyings so that they convey Z immediately after X and Y. Update the shading struct's varyDim and the transformVertex function accordingly. Update colorPixel to have the following interface. The function outputs not just an RGB color but also a depth. At this point in the course, the depth should be the negation of the varying Z coordinate.

void colorPixel(

int unifDim, const double unif[], int texNum, const texTexture *tex[],

int varyDim, const double vary[], double rgbd[4])

130shading.c: Update the colorPixel member's type to match that function's new interface.

130triangle.c: Here's the interesting part. The new interface for triRender is shown below. Deep in its algorithm, it receives an RGBD value from colorPixel. Use it.

void triRender(

const shaShading *sha, depthBuffer *buf, const double unif[],

const texTexture *tex[], const double a[], const double b[],

const double c[])

130mesh.c: Because triRender is called from meshRender, and triRender needs a depth buffer, we have to update meshRender as follows.

void meshRender(

const meshMesh *mesh, depthBuffer *buf, const shaShading *sha,

const double unif[], const texTexture *tex[])

130mainDepth.c: In the appropriate places, declare, initialize, and destroy a depth buffer. On each animation frame, around the time that you clear the RGB buffer, also clear the depth buffer. Use the new meshRender. Also make various other required changes. I recommend that you inspect every line of code in this file. Test your program. The easiest way to make a strong test is to use two meshes that intersect. Here's mine:

The point of depth buffering is to get objects to occlude correctly. The point is not to improve the code's speed. However, we get a speed improvement as a bonus.

130triangle.c: What happens if a triangle crosses the boundary of the window, so that some of its pixels are outside the window? Well, pixSetRGB ignores requests to paint those pixels, so your image is unharmed. But your rasterizer still wastes time trying to paint them. For the first time in the course, you can now optimize-out this wasted time. How? Do it.

130mainDepth.c: Test. You should get exactly the same output as you did before. To see the effects of the optimization, try using a mesh, whose triangles cross the window boundaries. (In my test, the optimization quintupled my frame rate. Your results may vary.)

Clean up and hand in 130mainDepth.c and its dependencies.

Today's homework is a bit short, and the next day's homework is a bit long. Consider getting started on it. You know everything that you need to know for the first section.

This assignment is due at the start of class on Day 12 (because we have an exam on Day 11.) We implement camera positioning, orthographic projection, and the viewport transformation. These changes make the relationship between world space and screen space more flexible, so that the users of our software can think about the 3D world that they want to build, instead of the screen on which it is displayed.

140matrix.c: Implement the following functions. The first one is easy. The second one could be done by transposing and then multiplying, but try to make it faster than that. Mine is exactly as fast as mat331Multiply.

/* Sets its argument to the 4x4 zero matrix (which consists entirely of 0s). */

void mat44Zero(double m[4][4])

/* Multiplies the transpose of the 3x3 matrix m by the 3x1 matrix v. To

clarify, in math notation it computes M^T v. The output CANNOT safely alias the

input. */

void mat331TransposeMultiply(

const double m[3][3], const double v[3], double mTTimesV[3])

140isometry.c: Study. As the course proceeds, isometries become increasingly important to us. So they're worth turning into a "class", like meshes and textures already are. That's what this file does. There are two un-implemented "methods". Implement them.

140mainCamera.c: Start with a copy of 130mainDepth.c, so that non-trivial scene objects are being rendered. In the main function, write non-trivial tests for the two isometry methods that you just implemented, to verify that they are working.

140camera.c: Study. This file defines a camera class with a "has-a" relationship to the isometry class. Two of its methods are not yet implemented. We implement them later in this assignment.

140mainCamera.c: Delete the method tests that you just made. Declare a global camera variable. Right now we focus on its isometry; don't worry about its projection. Add a 4x4 matrix of uniforms to hold a homogeneous transformation for the camera. In some user interface handler, use camLookAt to revolve the camera around the scene objects. For example, you could revolve the camera continually in the time step handler or discretely in response to the user's pressing keys. The camLookAt function updates the camera's isometry; you then put the inverse of that isometry into the camera's 4x4 uniform matrix. In transformVertex, apply this transformation after the modeling isometry. Test. (In my version of this program, the scene objects appear in the lower-left corner of the window. That's reasonable and not any kind of error. Why?)

It's tempting to combine the projection and the viewport into a single matrix that takes effect inside the vertex shader. But later we will need the viewport to happen after the vertex shader. So we structure the code in that way.

000matrices4By4More.pdf: Study.

140matrix.c: Implement the following functions.

/* Builds a 4x4 matrix for a viewport with lower left (0, 0) and upper right (width, height). This matrix maps a projected viewing volume [-1, 1] x [-1, 1] x [-1, 1] to screen [0, w] x [0, h] x [0, 1] (each interval in that order). */ void mat44Viewport(double width, double height, double view[4][4]) /* Inverse to mat44Viewport. */ void mat44InverseViewport(double width, double height, double view[4][4])

140mainViewport.c: Start with a copy of 140mainCamera.c. Enlarge the varyings so that they begin with XYZW instead of just XYZ. At this point of the course, W is always 1. Test. The program should produce the same visuals as it did before this change.

140mesh.c: Perform the viewport transformation here, just after the vertex shader. So that it has access to the viewport, meshRender now has this interface:

void meshRender(

const meshMesh *mesh, depthBuffer *buf, const double viewport[4][4],

const shaShading *sha, const double unif[], const texTexture *tex[])

140camera.c: Implement the two un-implemented methods. In the latter, assume for now that the camera is always in orthographic mode. (We remove this assumption in the next assignment.)

On paper: Design a 3D scene. Figure out which objects you'll have (a sphere, a box, etc.?), how big they will be (radius 1, etc.?), where they will be in space (near the origin?), where your camera will be, how it will be aimed, and how big its viewing volume will be. Rig everything so that the objects will appear near the center of the screen, when they are eventually rendered.

140mainViewport.c: Incorporate the viewport and projection. The projection can be combined with the inverse camera isometry into a single uniform 4x4 matrix. You'll have to configure it using some combination of camSetProjection, camSetProjectionType, camSetFrustum, etc. In the fragment shader, the depth should now equal the varying Z (because the negation, which we used to have here, is implicit in the projection). Test. If you are seeing a solid black screen, then there could be an error in your math, but the cause could also be that your camera is pointed away from your scene objects or that your viewing volume is too small. That's why planning on paper is helpful. Here's a screenshot of mine:

Clean up and hand in 140mainViewport.c and its dependencies.

There is no new homework today, because of the exam. Finish the Day 10 homework by the start of class on Day 12.

Today we implement perspective projection. It's pretty easy, except that it leads to other issues (clipping, perspective-corrected interpolation) that are not easy.

150camera.c: Implement the following functions. Also, alter camGetProjectionInverseIsometry so that it works whether the camera's projection type is orthographic or perspective.

/* Builds a 4x4 matrix representing perspective projection. The viewing frustum is contained between the near and far planes, with 0 > near > far. On the near plane, the frustum is the rectangle R = [left, right] x [bottom, top]. On the far plane, the frustum is the rectangle (far / near) * R. Maps the viewing volume to [-1, 1] x [-1, 1] x [-1, 1], with far going to 1 and near going to -1. */ void camGetPerspective(const camCamera *cam, double proj[4][4]) /* Inverse to the matrix produced by camGetPerspective. */ void camGetInversePerspective(const camCamera *cam, double proj[4][4])

150mesh.c: Perform the homogeneous division, either immediately before the viewport transformation or immediately after it. You may assume that W is non-zero. (At this point in the course, W might be zero in rare cases. And then your program might malfunction. That's okay for now.)

150landscape.c: Skim. This file helps us make more complicated scenes, so that we can strenuously examine how perspective behaves. (Also, I'm tired of simple shapes.)

150mainPerspective.c: Near the start of main is a chunk of code that is supposed to randomly generate a landscape. Complete the code using functions from 150landscape.c. Then the program should work. If the rendering is unbearably slow, then decrease LANDSIZE. Try all of the keyboard controls. Move the camera so that it views the landscape from a shallow angle — as if it were in a low-flying aircraft. In this situation, switching between orthographic and perspective projections should produce a strong visual effect. Your program should work perfectly when all scene objects are in front of the camera. On the other hand, undesirable artifacts (and slow speeds, and maybe crashes) should arise when the camera is perspective and objects are at or behind the camera plane. For example, the first image below is fine. I push the camera forward slightly to get the second image, which is fine. I push the camera forward slightly again to get the third image, which is terribly wrong.

160mesh.c: Here is the interesting part. Implement the near-plane clipping algorithm discussed in class. Shall I refresh your memory? The algorithm happens in normalized device coordinates — that is, after projection and before viewport or homogeneous division. A vertex a is clipped if either a3 ≤ 0 or a3 < -a2. If all three vertices in a triangle are clipped, then the entire triangle is clipped. If two vertices are clipped, then the triangle is clipped to a smaller triangle. If one vertex is clipped, then the triangle is clipped to two smaller triangles. If no vertices are clipped, then the triangle is not clipped at all. The vertices of the smaller triangles are found by linear interpolation. More specifically, suppose that vertex a is clipped and vertex b is not. Then a + t (b - a), with t = (a2 + a3) / (a2 + a3 - b2 - b3), is where the near plane intersects the triangle side from a to b. This interpolation applies to not just the XYZW coordinates but rather the entire varying vector.

160mainClipping.c: In a copy of 150mainPerspective.c, switch 150mesh.c to 160mesh.c and test again. There should be no artifacts or crashes when objects cross the camera plane. Instead, they should appear clipped where they cross the near plane. Both perspective and orthographic projections should work.

Clean up and hand in 150mainPerspective, 160mainClipping.c, and their dependencies.

Today's assignment is intentionally short. The first part of the assignment is not large, and the second part is optional. Perhaps students who are behind the schedule can catch up :).

170mesh.c: Implement perspective-corrected interpolation as we discussed in class. Formerly you performed the homogeneous division on components 0, 1, 2, 3 of the varying vector. Now you perform homogeneous division on the entire varying vector except for component 3. Component 3 inverts from W to its reciprocal 1 / W.

170mainInterpolating.c: Make a demo program that uses at least one texture. In the fragment shader, divide the extra varyings (the texture coordinates) by the interpolated 1 / W, to cancel out the effect of dividing them by W in the mesh renderer. Test. Your textures should not suffer from the bending problem, which is illustrated in the top of the following screenshot.

Clean up and hand in 170mainInterpolating.c and its dependencies. (And here's a study question to ponder: In the screenshot above, both cubes are drawn using the perspective-corrected 170mesh.c. But the shaders on the top cube are rigged to undo the correction. How?)

Congratulations! Our first 3D graphics engine is complete. It might not seem complete, because we have made only rudimentary images with it. However, a wide variety of features, effects, and applications can be implemented using what we have now.

Let's take this opportunity to package our graphics engine for posterity. This packaging has several benefits. First, we can release a binary version without giving away the source code. Second, we can hide the implementation of the engine from its users, so that they use only the interfaces. Third, those users do not need to re-compile the graphics engine every time they compile their programs. (In a small project like this, the compilation time is small. In larger projects, the time can be huge.) Fourth, the users need to include just one header file instead of 10 or more C files.

180engine.h: Skim. This header file specifies public interfaces without committing to any details about implementation. For example, the functions in 130triangle.c are not listed, because they are not intended for public use. If you have some other functions that you want to make public, then feel free to add them. But don't remove or rename any functions that are there.

180engine.c: Compile following the directions at the top of the file. The resulting 180engine.o is the binary version of our graphics engine.

180mainEngine.c: Start with a copy of 160mainClipping.c. Edit it to #include 180engine.h but none of our other engine files. At the top, change the compilation instructions, so that the user knows how to link to 180engine.o. Edit the fragment shader (why and how?). Test that the program works correctly in both orthographic and perspective.

If you ever wanted to play around with our graphics engine — try a weird effect, make better art, etc. — then now would be the time. For example, I added a textured sky (how?), a grass texture, and untextured shrubbery. Then I added a mirror effect to the water (how?) and a blur effect to the mirror. These features were added in main.c; no edits to the engine were required. There are some undesirable artifacts where the water meets the land, but I'm calling it done.